Integrating GPT29 Telemetry into Your TMS/WMS/Data Lake: Practical Patterns and Example Payloads

This post complements the GPT29 Cold Chain White Paper.

Download the PDF: GPT29 Cold Chain Tracking for Freezers — Engineering & Compliance White Paper

Integration-first mindset: own your visibility stack

Many overseas operators already have a visibility stack: dispatch/TMS, warehouse systems, claims workflows, and analytics. For these teams, a tracker is valuable when it delivers:

- Clean data contracts (consistent fields, units, and timestamps).

- Reliable ingestion across offline windows and resends.

- Actionable events (temperature excursion, exposure, shock) rather than only raw data points.

GPT29 is commonly evaluated for in-transit monitoring with multi-sensor signals. Product background: GPT29 In-Transit Monitoring Device.

Pattern 1: Push-based ingestion (recommended for near real-time workflows)

In a push model, the device (or gateway service) sends telemetry to your ingestion endpoint as reports occur. Common transport choices include:

- HTTPS (REST-like posts for telemetry/event batches)

- MQTT (publish/subscribe model suitable for IoT scale)

- Webhooks (server-to-server notifications, typically used when a platform exists in between)

Push ingestion is well-suited for exception-driven reporting (higher frequency during excursions) and operational alerting.

Pattern 2: Pull-based ingestion (useful for batch reporting and audits)

In a pull model, your system periodically retrieves stored telemetry/event data from an endpoint. This can be useful for:

- daily compliance reports,

- bulk reconciliation after long offline windows,

- data warehouse ingestion jobs.

Pull models can be simpler operationally but may delay alerts. Many programs use push for alerts and pull for reporting reconciliation.
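As a rough sketch of the reconciliation step, the snippet below compares the sequence numbers already ingested through push with a batch retrieved by pull, and returns the records that are still missing. It assumes each record carries a per-device sequence number in `integrity.seq`, as in this post's example payloads. The function name `missing_sequences` is hypothetical and not part of any GPT29 API.

```python
def missing_sequences(ingested_seqs, pulled_records):
    """Return pulled records whose seq was never ingested, ordered by seq."""
    seen = set(ingested_seqs)
    missing = {}
    for record in pulled_records:
        seq = record["integrity"]["seq"]
        if seq not in seen:
            # A pull batch may itself contain repeats; keep one record per seq.
            missing[seq] = record
    return [missing[seq] for seq in sorted(missing)]
```

A nightly job could feed the returned records through the same ingestion path used for push, so both paths share one set of validation and deduplication rules.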

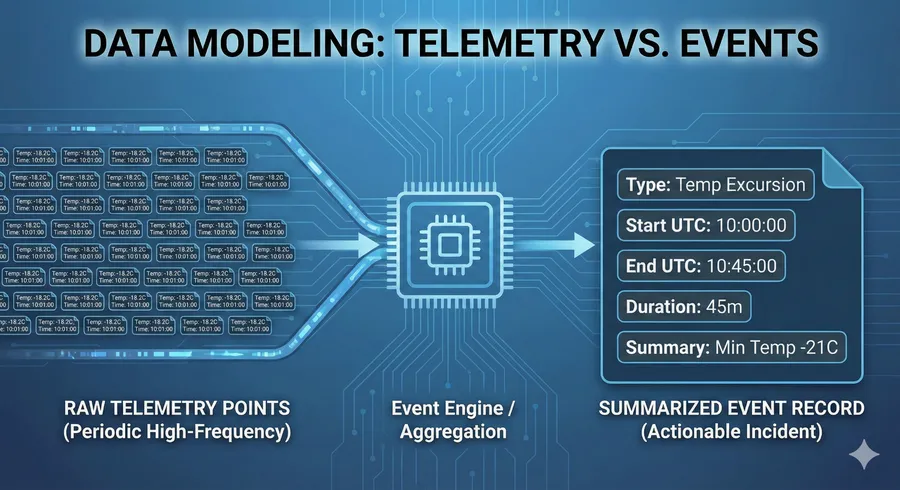

Telemetry vs. Events. Scalable integrations ingest both periodic raw data (for context) and summarized event records (for audit-ready incidents), as shown in the JSON examples.

Telemetry vs Events: model both (and keep them consistent)

The most scalable cold chain integrations store two related streams:

- Telemetry points: periodic measurements (temperature, humidity, battery, location, light level, etc.).

- Events: incident summaries with start/end semantics (temperature excursion, exposure incident, shock event).

Telemetry provides detailed context; events provide audit-ready, explainable incidents. See excursion modeling: Temperature Excursions (Threshold + Duration).
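To illustrate how the two streams relate, here is a minimal sketch that derives excursion events from telemetry points using a threshold plus a minimum duration. The threshold of -15 °C and the 10-minute minimum are placeholder values, not GPT29 defaults, and the function names are hypothetical. The sketch assumes all points come from a single device and treats the last above-threshold sample as the end of the event.

```python
from datetime import datetime, timedelta


def parse_utc(ts):
    # Accept the trailing "Z" used in the example payloads.
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))


def detect_excursions(points, threshold_c=-15.0, min_duration=timedelta(minutes=10)):
    """Turn telemetry points into excursion events (threshold + duration)."""
    events, run = [], []
    for point in sorted(points, key=lambda p: parse_utc(p["sampled_at_utc"])):
        if point["temperature"]["value"] > threshold_c:
            run.append(point)  # still above threshold: extend the current run
        else:
            _emit_if_long_enough(run, min_duration, events)
            run = []
    _emit_if_long_enough(run, min_duration, events)  # run still open at end of data
    return events


def _emit_if_long_enough(run, min_duration, events):
    if not run:
        return
    start = parse_utc(run[0]["sampled_at_utc"])
    end = parse_utc(run[-1]["sampled_at_utc"])
    if end - start < min_duration:
        return  # too short to count as an incident under this rule
    temps = [p["temperature"]["value"] for p in run]
    events.append({
        "device_id": run[0]["device_id"],
        "event_type": "temperature_excursion",
        "started_at_utc": run[0]["sampled_at_utc"],
        "ended_at_utc": run[-1]["sampled_at_utc"],
        "duration_seconds": int((end - start).total_seconds()),
        "summary": {"max_temp_c": max(temps), "min_temp_c": min(temps)},
    })
```

Deriving events from the stored telemetry with a versioned rule keeps the two streams consistent: any event can be re-explained from the points that produced it.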

Example payload templates (conceptual)

Below are simplified templates that show recommended field conventions: UTC timestamps, explicit units, and stable IDs. They are illustrative only; adapt them to your final integration contract.

Telemetry record (example)

{
  "device_id": "GPT29-XXXXXX",
  "sampled_at_utc": "2026-01-12T03:15:00Z",
  "location": {"lat": -33.42, "lng": -70.65, "source": "gnss"},
  "temperature": {"value": -18.4, "unit": "C"},
  "humidity": {"value": 62.1, "unit": "%"},
  "light": {"value": 0.8, "unit": "lux", "flag_exposure": false},
  "motion": {"shock_flag": false, "vibration_level": "low"},
  "battery": {"percent": 78},
  "integrity": {"seq": 184221, "idempotency_key": "..."}
}

Event record (example)

{

"device_id": "GPT29-XXXXXX",

"event_id": "evt_8b1a...",

"event_type": "temperature_excursion",

"rule_id": "temp_rule_v3",

"started_at_utc": "2026-01-12T03:14:00Z",

"ended_at_utc": "2026-01-12T04:02:00Z",

"duration_seconds": 2880,

"summary": {

"max_temp_c": -12.7,

"min_temp_c": -19.1

},

"context_flags": {

"light_exposure": true,

"shock_event": false,

"offline_gap_detected": true

},

"integrity": {"seq_start": 184200, "seq_end": 184248}

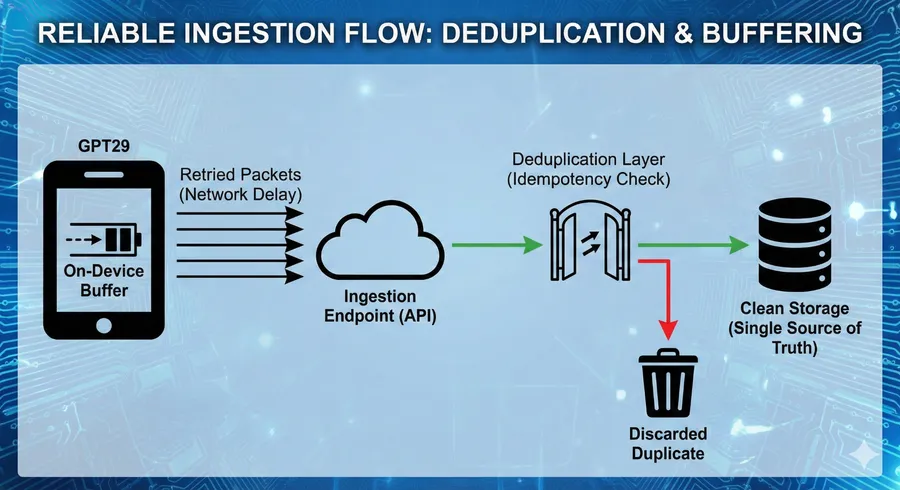

}Reliability requirements: buffering + resend + deduplication

Overseas routes include offline windows, so your ingestion system should expect buffered data to arrive late, along with replays and duplicates. Key controls include:

- Buffering on the device (store-and-forward).

- Resend logic with backoff (to protect battery).

- Deduplication via idempotency keys or sequence numbers.

Deep dive: Cold Chain IoT Data Integrity (Buffering, Resends, Idempotency).
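As one possible shape for the resend schedule, the sketch below generates capped exponential backoff delays with full jitter (each delay is drawn uniformly between zero and the current ceiling). The base, cap, and attempt count are placeholder values, and `backoff_delays` is a hypothetical helper, not GPT29 firmware behavior.

```python
import random


def backoff_delays(max_attempts=6, base_seconds=30.0, cap_seconds=3600.0, rng=random.random):
    """Yield one delay per retry: exponential growth, capped, with full jitter."""
    for attempt in range(max_attempts):
        ceiling = min(cap_seconds, base_seconds * (2 ** attempt))
        # rng() returns a float in [0, 1), so the delay falls in [0, ceiling).
        yield rng() * ceiling
```

Spacing retries out this way limits radio wake-ups during long outages, and the jitter keeps many devices from retrying at the same moment when coverage returns.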

Figure 3: Ensuring reliability. A robust ingestion pipeline handles on-device buffering and uses idempotency checks at the gateway to discard duplicates caused by network retries.
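A minimal sketch of the idempotency check follows, keyed on `device_id` plus `integrity.seq` from the example telemetry record. The `Deduplicator` class is hypothetical and keeps its state in memory purely for illustration; a real pipeline would more likely rely on a persistent store or a unique constraint in the database.

```python
class Deduplicator:
    """Remember which (device_id, seq) pairs were already accepted."""

    def __init__(self):
        self._seen = set()

    def accept(self, record):
        """Return True the first time a record is seen, False for replays."""
        key = (record["device_id"], record["integrity"]["seq"])
        if key in self._seen:
            return False
        self._seen.add(key)
        return True


dedup = Deduplicator()
record = {"device_id": "GPT29-XXXXXX", "integrity": {"seq": 184221}}
first = dedup.accept(record)   # new record: store it
replay = dedup.accept(record)  # same device and seq again: discard it
```

Because the key is derived from the payload itself, a retried request produces the same key as the original and can be dropped without inspecting the sensor values.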

Storage and retention (engineering guidance)

Choose storage based on your query patterns:

- Time-series queries (temperature over time): consider time-partitioned tables or time-series optimized storage.

- Incident investigations: index events by device, trip, and time window.

- Audit retention: define retention windows consistent with your compliance program and claims timelines.

Consider storing “trip metadata” (shipment ID, lane, container ID, customer) so you can join telemetry to business context.
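The sketch below shows one way such a join could look: it tags a telemetry point with the shipment whose time window covers the sample, and derives a daily partition key from the sample time. The trip fields (`shipment_id`, `started_at_utc`, `ended_at_utc`) and both function names are assumptions made for illustration, not a defined GPT29 schema.

```python
from datetime import datetime


def parse_utc(ts):
    # Accept the trailing "Z" used in the example payloads.
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))


def attach_trip(point, trips):
    """Return a copy of point with the shipment_id of the covering trip, or None."""
    sampled = parse_utc(point["sampled_at_utc"])
    for trip in trips:
        same_device = trip["device_id"] == point["device_id"]
        in_window = parse_utc(trip["started_at_utc"]) <= sampled <= parse_utc(trip["ended_at_utc"])
        if same_device and in_window:
            return {**point, "shipment_id": trip["shipment_id"]}
    return {**point, "shipment_id": None}


def partition_key(point):
    """Daily partition key (UTC date) for time-partitioned storage."""
    return parse_utc(point["sampled_at_utc"]).strftime("%Y-%m-%d")
```

In a warehouse the same logic would usually be a range join between the telemetry table and a trips table, with the partition key limiting how much data each query scans.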

Security considerations (baseline guidance)

International telemetry systems should implement a baseline security posture:

- Transport security (TLS),

- Authentication (device credentials or token-based access),

- Access control (least privilege for ingestion endpoints),

- Audit logging for configuration changes and rule updates.

Security design should align with your organization’s policies and threat model.

Recommended next steps

If you want to integrate GPT29 telemetry into your platform, start with a pilot that validates: data contract stability, dedupe behavior, timestamp consistency, and excursion/event quality.

Download (PDF): GPT29 Cold Chain Tracking for Freezers — Engineering & Compliance White Paper

Contact: Contact Us.

Related solution pages: Supply Chain Visibility Solutions and Container GPS Tracking Device for Cargo & Shipment.

FAQ

Should I store every raw sensor sample?

Not always. Many programs store periodic telemetry plus event summaries. Store high-resolution data selectively during incidents if required.

What is the most common failure in integrations?

Inconsistent timestamps and duplicate ingestion. Standardize on UTC, enforce idempotency/deduplication at ingestion, and validate via a pilot.
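As a small illustration of the timestamp rule, the sketch below normalizes ISO 8601 timestamps to UTC with a trailing "Z", matching the format used in the example payloads, and rejects timestamps that carry no offset. The helper name `to_utc_z` is hypothetical.

```python
from datetime import datetime, timezone


def to_utc_z(ts):
    """Convert an ISO 8601 timestamp with an explicit offset to UTC with a 'Z' suffix."""
    dt = datetime.fromisoformat(ts.replace("Z", "+00:00"))
    if dt.tzinfo is None:
        # A timestamp without an offset is ambiguous; refuse it rather than guess.
        raise ValueError(f"timestamp has no UTC offset: {ts!r}")
    return dt.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
```

Applying one normalizer at the ingestion boundary means everything downstream (deduplication, excursion rules, reports) compares timestamps on the same basis.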